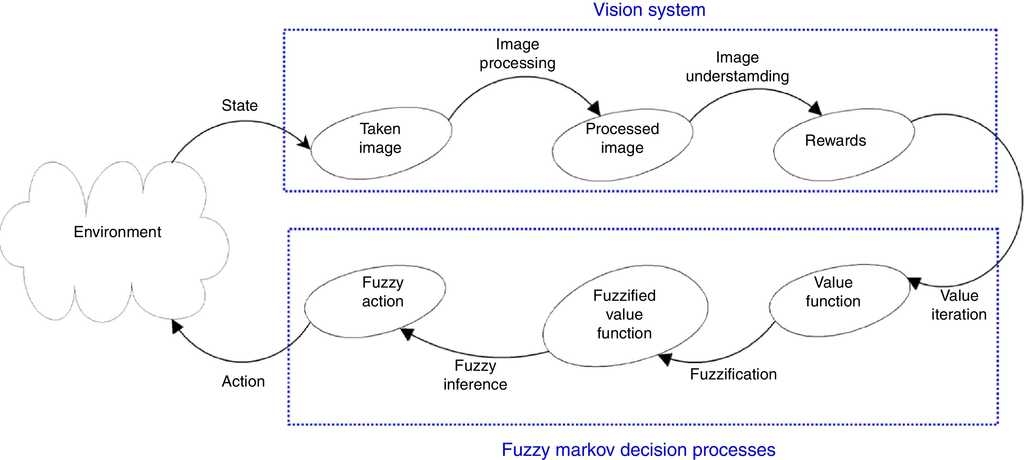

Applied Sciences | Free Full-Text | Decision Making with STPA through Markov Decision Process, a Theoretic Framework for Safe Human-Robot Collaboration

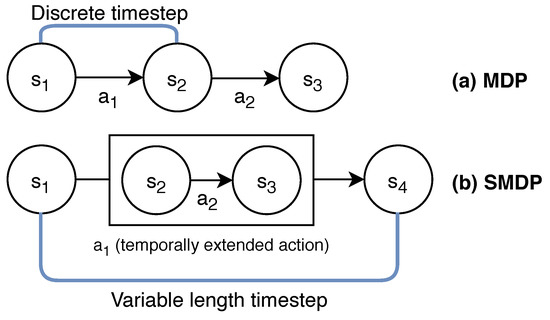

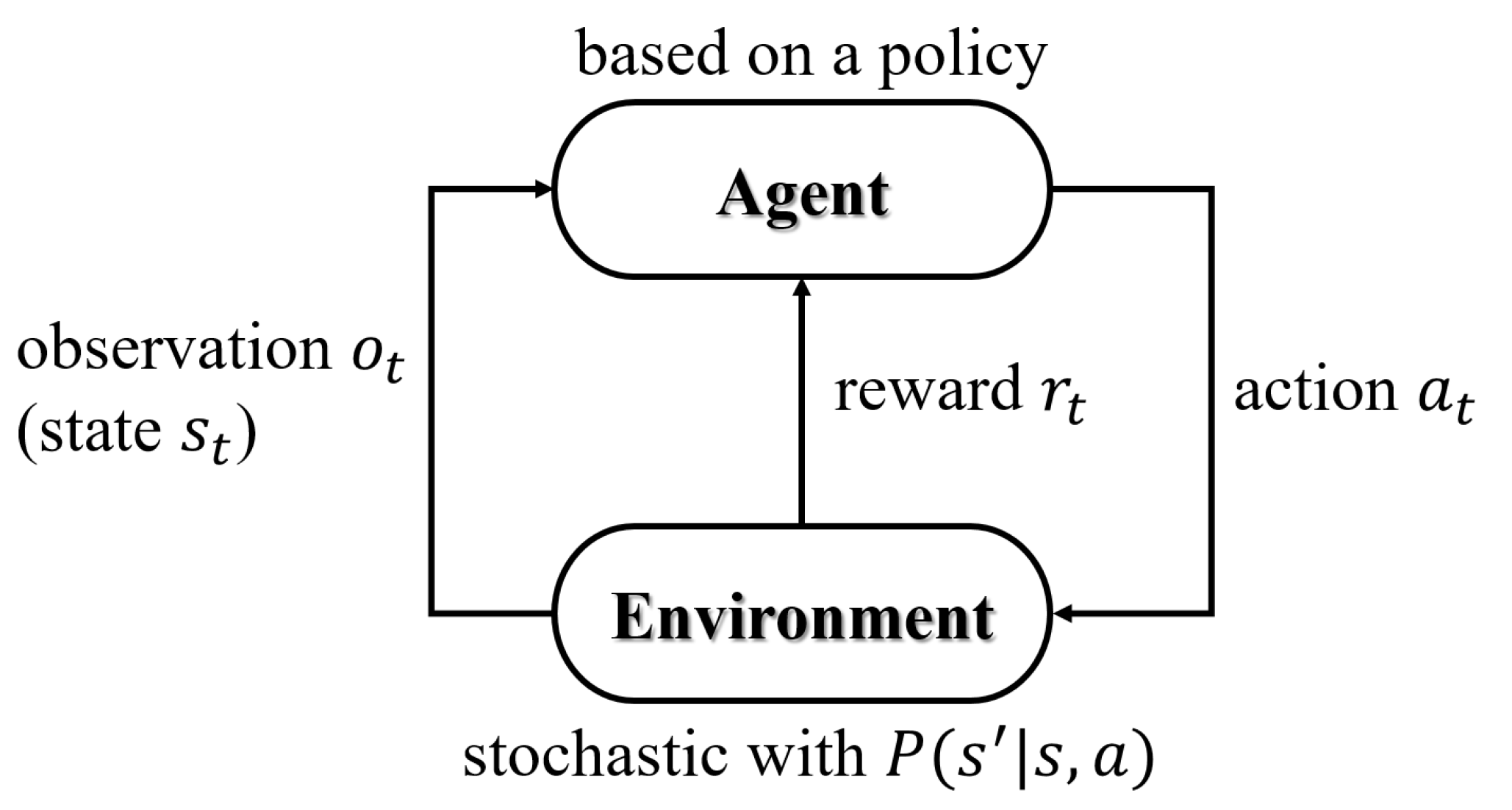

The Five Building Blocks of Markov Decision Processes | by Wouter van Heeswijk, PhD | Towards Data Science

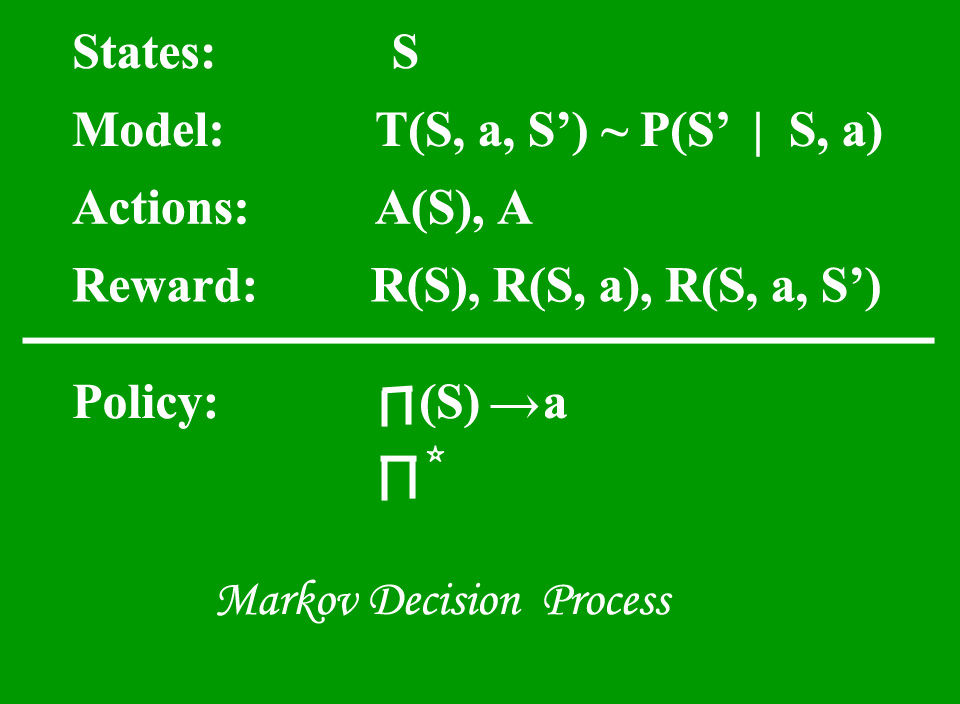

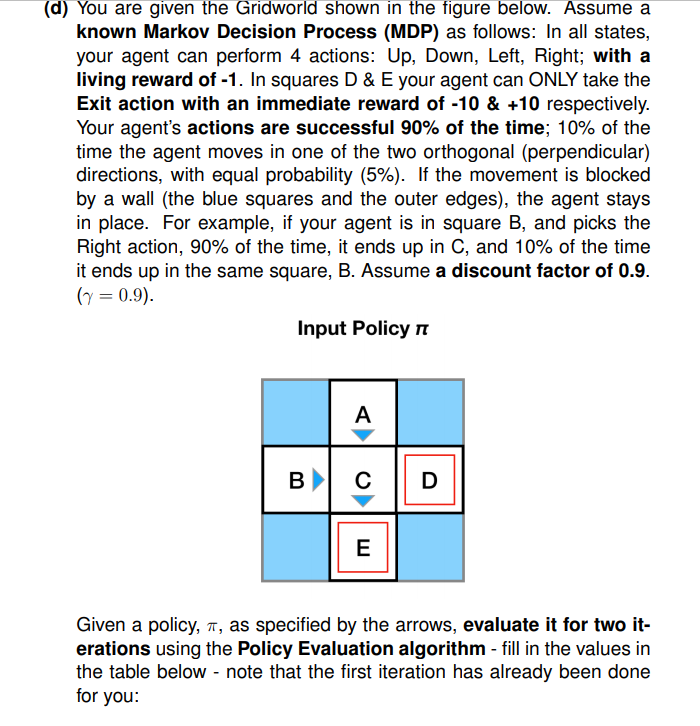

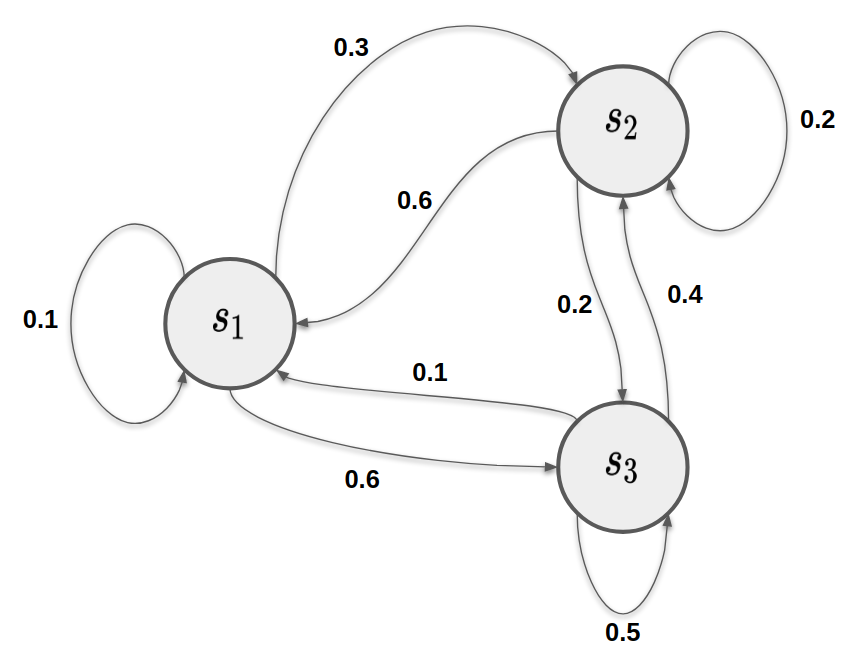

Finite Markov Decision Processes. This is part 3 of the RL tutorial… | by Sagi Shaier | Towards Data Science

Applied Sciences | Free Full-Text | Motion Planning of Robot Manipulators for a Smoother Path Using a Twin Delayed Deep Deterministic Policy Gradient with Hindsight Experience Replay

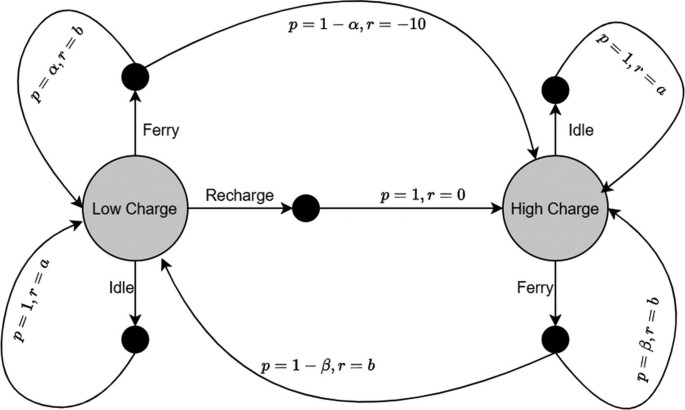

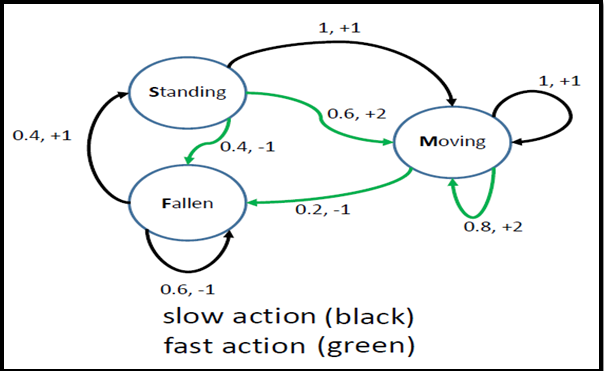

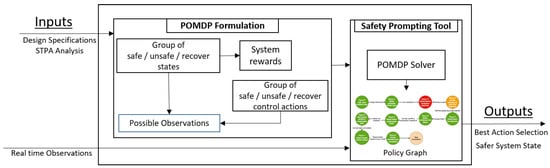

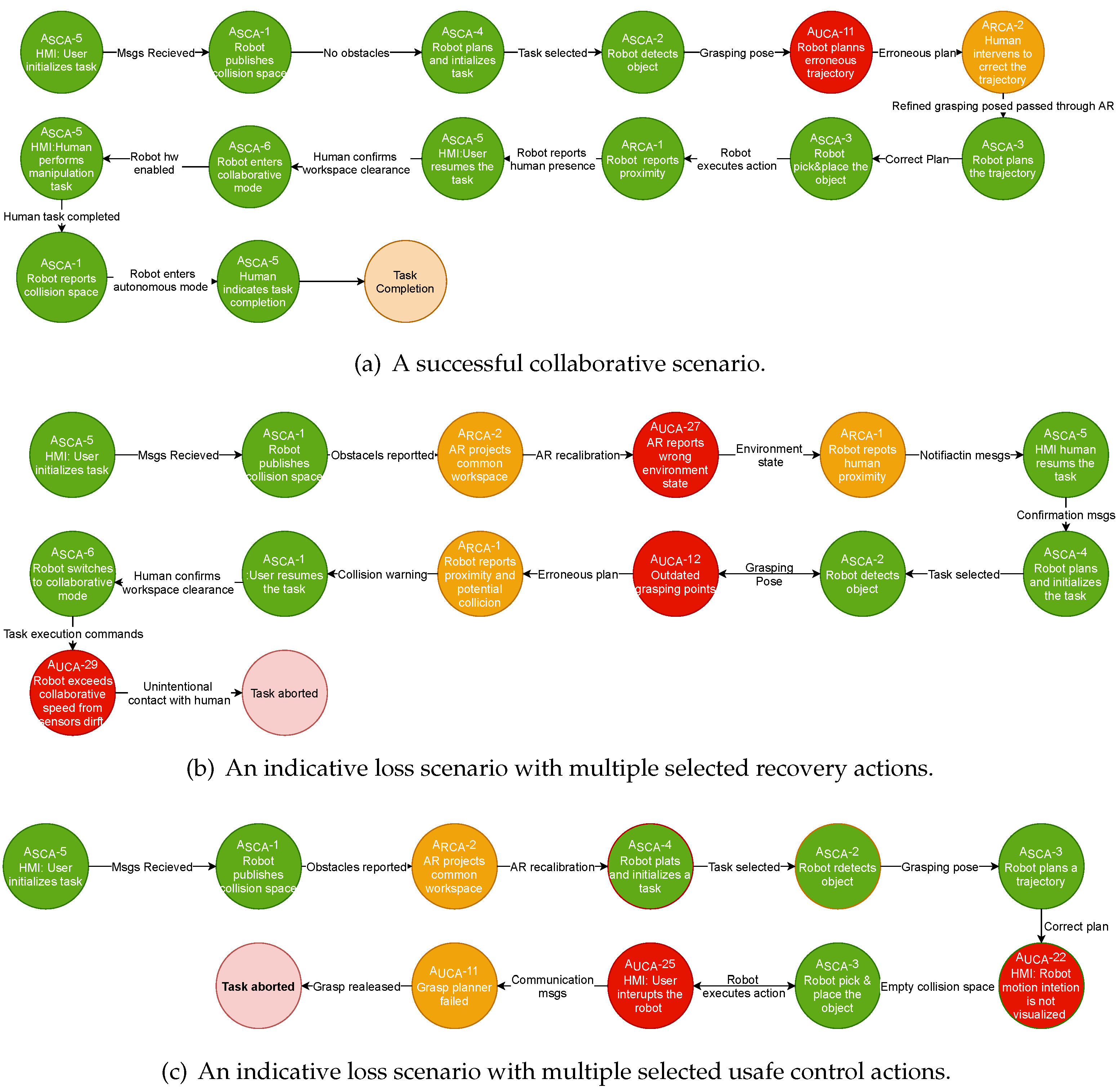

MAKE | Free Full-Text | Recent Advances in Deep Reinforcement Learning Applications for Solving Partially Observable Markov Decision Processes (POMDP) Problems: Part 1—Fundamentals and Applications in Games, Robotics and Natural Language Processing

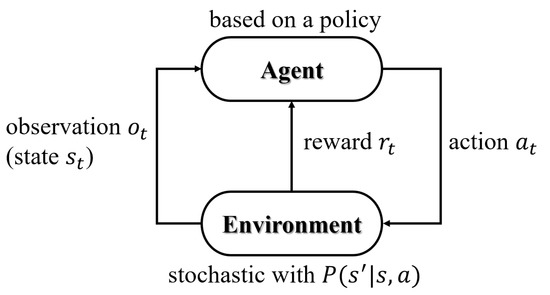

Reinforcement Learning and the Markov Decision Process | by Sebastian Dittert | Analytics Vidhya | Medium
